Hopefully I managed to get all the various increasing/decreasing pointing in the right direction. Without the 140-character limit, it's hard to stop typing, even if I try to just link and give a very terse explanation. But the interpretation is that the population is "learning" more and more about the stable state, until it achieves that state and knows all there is to know! Okay, you can see why tweeting is seductive. Witnesses claimed the strange substance killed plants and sickened people. In the late 1970s, after the end of the Vietnam War, many Vietnamese and Laotian people began noticing that a sticky yellow liquid periodically rained down from otherwise sunny skies. Since information is minus entropy, this is a Second-Law-like behavior. A depiction of a beekeeper’s hive from John Worlidge’s Vinetum britannicum (1678).

Then the take-home synthesis is this: if you are not in an evolutionarily stable state, then as your population evolves, the relative information between the actual state and the stable one decreases with time. Established in 1992, as a youth culture magazine, Entropy Magazine’s is a design driven magazine, whose philosophy is to discover new creative talent within the fields of design, art. An equilibrium configuration, we might say. Then there is something called an evolutionarily stable state, one in which the relative populations (the fraction of the total number of organisms in each species) is constant. That is: imagine that every member of the population breeds at some rate that depends on circumstances. Make the natural assumption that the rate of change of a population is proportional to the number of organisms in that population, where the "constant" of proportionality is a function of all the other populations. The second point has to do with the evolution of populations in biology (or in analogous fields where we study the evolution of populations), following some ideas of John Maynard Smith. The relative information between two distributions can be thought of as how much you don't know about one distribution if you know the other one the relative information between a distribution and itself is zero. (Aside to experts: I'm kind of shamelessly mixing Boltzmann entropy and Gibbs entropy, but in this case it's okay, and if you're an expert you understand this anyway.) John explains that the information (and therefore also the entropy) of some probability distribution is always relative to some other probability distribution, even if we often hide that fact by taking the fiducial probability to be uniform (.

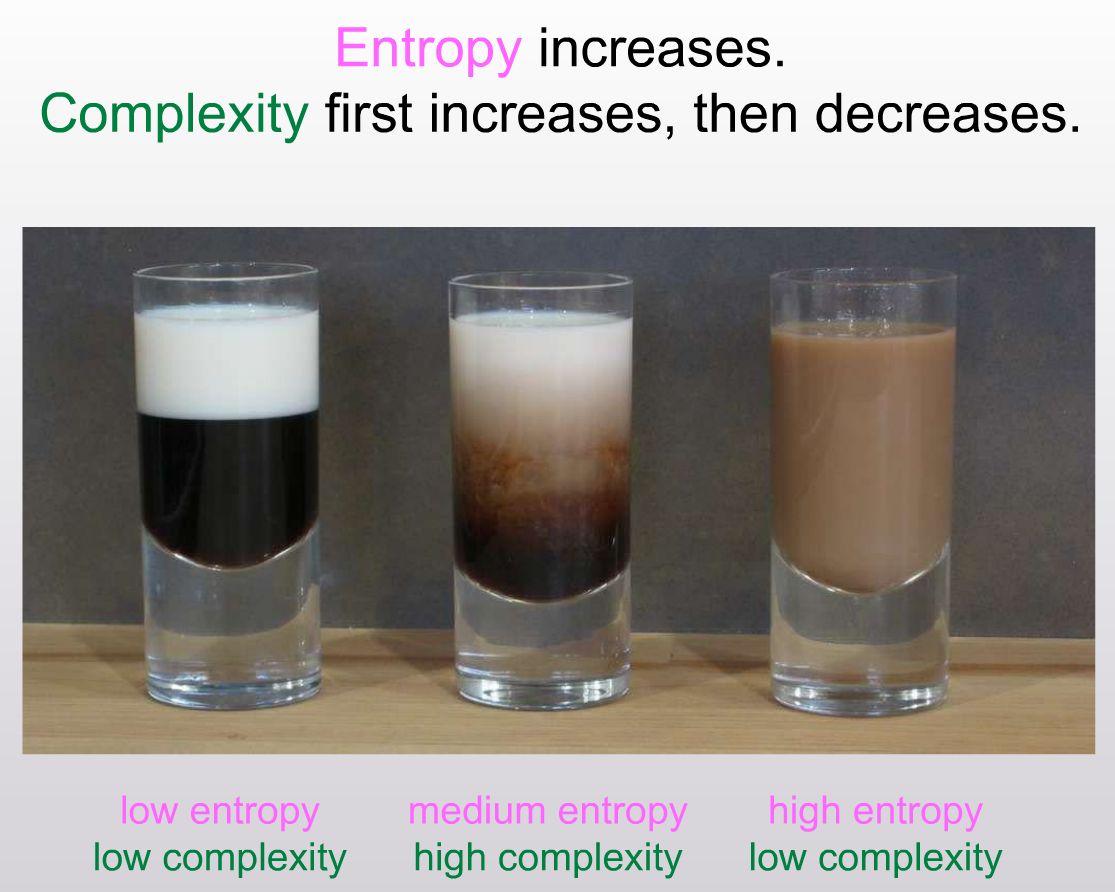

If it's high-entropy, there are many states that look that way, so you don't have much information about it. But if a provocative new theory is correct, luck may have little to do with it. Information can be thought of as "minus the entropy," or even better "the maximum entropy possible minus the actual entropy." If you know that a system is in a low-entropy state, it's in one of just a few possible microstates, so you know a lot about it. Popular hypotheses credit a primordial soup, a bolt of lightning and a colossal stroke of luck. It's the idea of "relative entropy" and its equivalent "information" formulation. The first is a bit of technical background you can ignore if you like, and skip to the next paragraph. She loves reading, and photography is also another big hobby of hers.Okay, sticking to my desire to blog rather than just tweet (we'll see how it goes): here's a great post by John Baez with the forbidding title "Information Geometry, Part 11." But if you can stomach a few equations, there's a great idea being explicated, which connects evolutionary biology to entropy and information theory. Oh how could I have been so lucky to meet youĪlanna Gray is 19 years old and has been writing since she was handed a pencil. The sound of my beating heart breaking out of rhythmįalling into entropy-How you have undone meĪ precious life made up out of oxygen and atoms Of all the beautiful words you painted me with While your fingertips leave burning lines on my armsĪ force tethering us together by a silver string The way I am beginning to fray at the seams Thoughts that hide at the edge of my subconsciousĪs I try to convey the feelings you evoke in me The only explanation for my scattered thoughts

The reason for my heart’s stuttering beats
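The first point of the post above, that information is "the maximum entropy possible minus the actual entropy" once the reference distribution is uniform, can be checked numerically. This is a minimal sketch, not code from the post or from Baez's article: it assumes the Shannon/Gibbs entropy in nats and the Kullback-Leibler form of relative information, sum_i p_i ln(p_i / q_i), and the distribution `p` is an arbitrary example chosen for illustration.

```python
import math


def entropy(p):
    """Shannon (Gibbs) entropy in nats; outcomes with p_i = 0 contribute nothing."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)


def relative_information(p, q):
    """Information of p relative to q in nats: sum_i p_i ln(p_i / q_i).

    Assumes q_i > 0 wherever p_i > 0.
    """
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


p = [0.7, 0.2, 0.1]             # an arbitrary example distribution
uniform = [1 / len(p)] * len(p)
max_entropy = math.log(len(p))  # entropy of the uniform distribution

# Relative to the uniform distribution, information = maximum entropy - actual entropy.
print(relative_information(p, uniform), max_entropy - entropy(p))
# A distribution carries no information relative to itself.
print(relative_information(p, p))
```

The two numbers on the first line agree because sum_i p_i ln(n p_i) = ln n + sum_i p_i ln p_i; swapping `uniform` for any other reference distribution gives the more general relative information John describes.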
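The take-home synthesis of the post above can be illustrated with a toy model. This is a sketch under our own assumptions, not Baez's derivation: two strategies, Hawk and Dove, whose growth rates are expected payoffs from a hypothetical Hawk-Dove matrix (resource value V = 2, fight cost C = 4) that depend only on the population fractions p_i. Under the post's proportional-growth assumption the fractions then obey the replicator equation dp_i/dt = p_i (f_i(p) - mean fitness), which we integrate with simple forward-Euler steps. For these payoffs the evolutionarily stable state is q = (1/2, 1/2), and one can check by hand that the information of q relative to the current state, I(q, p) = sum_i q_i ln(q_i / p_i), satisfies dI/dt = -2(1/2 - x)^2 ≤ 0, where x is the Hawk fraction. The simulation tracks that quantity along one trajectory.

```python
import math

# Hypothetical Hawk-Dove payoffs (resource value V = 2, fight cost C = 4).
# PAYOFF[i][j] is the payoff to strategy i when it meets strategy j; order is (Hawk, Dove).
PAYOFF = [[-1.0, 2.0],  # Hawk vs Hawk: (V - C) / 2, Hawk vs Dove: V
          [0.0, 1.0]]   # Dove vs Hawk: 0,           Dove vs Dove: V / 2

# Evolutionarily stable state for these payoffs: a Hawk fraction of V / C = 1/2.
STABLE = [0.5, 0.5]


def fitness(p):
    """Fitness of each strategy: its expected payoff against a population with fractions p."""
    return [sum(a * pj for a, pj in zip(row, p)) for row in PAYOFF]


def relative_information(p, q):
    """Information of p relative to q in nats: sum_i p_i ln(p_i / q_i)."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def replicator_step(p, dt):
    """One forward-Euler step of dp_i/dt = p_i * (f_i(p) - mean fitness)."""
    f = fitness(p)
    mean_f = sum(pi * fi for pi, fi in zip(p, f))
    new_p = [pi + dt * pi * (fi - mean_f) for pi, fi in zip(p, f)]
    total = sum(new_p)  # equals 1 up to rounding; renormalize to remove drift
    return [x / total for x in new_p]


def simulate(p0, dt=0.01, steps=2000):
    """Evolve p0 and record I(STABLE, p(t)) after every step."""
    p = list(p0)
    history = [relative_information(STABLE, p)]
    for _ in range(steps):
        p = replicator_step(p, dt)
        history.append(relative_information(STABLE, p))
    return p, history


final_p, info = simulate([0.1, 0.9])
print("final state:", final_p)
print("relative information: start", info[0], "end", info[-1])
print("never increased:", all(b <= a for a, b in zip(info, info[1:])))
```

Starting from a population that is 10% Hawks, the relative information shrinks at every step while the Hawk fraction approaches 1/2. Forward Euler was chosen only for brevity; with a much larger `dt` the discrete steps could overshoot the stable state, and the step-by-step decrease would no longer be guaranteed.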
